AI in Skills Assessment: Transforming Tech Hiring

Hiring for high-growth tech teams often means sorting through countless resumes without clear evidence of real skills. This challenge matters because every bad hire costs your startup time, money, and momentum. AI-powered skills assessments use advanced machine learning and natural language processing to deliver instant, personalized insights, helping you identify top talent based on actual ability rather than educated guesses.

Key Takeaways

| Point | Details |
| --- | --- |
| AI-Powered Assessments Are Efficient | AI assessments evaluate candidates based on actual skills and performance data, reducing the time spent on traditional hiring methods. |
| Customizable Evaluation Paths Enhance Engagement | AI frameworks provide tailored assessments, adapting to individual candidates and focusing on their unique strengths and weaknesses. |
| Real-Time Feedback Facilitates Growth | Candidates receive immediate insights during assessments, fostering a learning environment that encourages development. |
| Bias Mitigation is Crucial | Ensuring data fairness and conducting bias audits are essential to avoid discrimination and legal issues in AI assessments. |

AI-Powered Skills Assessment Explained

AI-powered skills assessment uses machine learning and natural language processing to evaluate candidate abilities faster and more accurately than traditional methods. Instead of relying solely on resumes or gut feelings, these systems analyze real performance data to measure what candidates actually know.

Here’s what makes AI assessment different from standard testing:

- Adaptive difficulty: Questions adjust based on candidate responses, preventing boredom or overwhelming frustration
- Real-time feedback: Candidates get instant insights about strengths and gaps instead of waiting weeks for results
- Customized evaluation paths: Each assessment follows a unique route tailored to the candidate’s background and role requirements
- Pattern recognition: AI spots skills indicators humans might miss, like problem-solving approach or communication style
- Objective scoring: Applies the same rubric to every candidate, which reduces evaluator bias (though it does not eliminate it) and makes hiring decisions fairer and more consistent

Think of it like a conversation between the system and the candidate rather than a one-size-fits-all test. Personalized assessment frameworks leverage advanced AI techniques to deliver customized feedback and adaptive learning pathways that enhance engagement and reveal true capability.

How the Technology Works

When a candidate takes an AI-powered assessment, several things happen simultaneously. The system analyzes their answers, response time, and problem-solving approach to build a profile of their actual skills.

The technology processes data through multiple layers:

- Parse candidate responses and extract key information
- Compare responses against skill benchmarks for the specific role
- Identify patterns in how the candidate thinks and works
- Generate real-time difficulty adjustments based on performance
- Compile comprehensive skill gaps and strength analysis
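To make the difficulty-adjustment step concrete, here is a minimal sketch of a staircase rule in Python. It is an illustration only, not how any specific assessment platform works: the function names, the 1-to-5 difficulty scale, and the 60-second speed threshold are all assumptions made up for this example.

```python
def next_difficulty(current: int, correct: bool, seconds_taken: float,
                    fast_threshold: float = 60.0,
                    min_level: int = 1, max_level: int = 5) -> int:
    """Return the difficulty level for the next question (hypothetical 1-5 scale)."""
    if correct and seconds_taken <= fast_threshold:
        step = 1    # quick and correct: move up a level
    elif correct:
        step = 0    # correct but slow: stay at this level
    else:
        step = -1   # incorrect: move down a level
    # Clamp so the level never leaves the allowed range
    return max(min_level, min(max_level, current + step))


def run_session(responses, start_level: int = 3):
    """Replay (correct, seconds_taken) pairs and return every level visited."""
    levels = [start_level]
    for correct, seconds in responses:
        levels.append(next_difficulty(levels[-1], correct, seconds))
    return levels


# A fast correct answer, a slow correct answer, then a miss
levels = run_session([(True, 30), (True, 90), (False, 40)])
print(levels)  # [3, 4, 4, 3]
```

Production systems typically rely on more principled statistical models, such as item response theory, but the feedback loop is the same: each answer changes what the candidate sees next.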

AI assessments reveal what candidates can actually do, not just what they claim on a resume.

This approach works especially well for tech roles where skills matter more than pedigree. A candidate might have no formal degree but possess intermediate Python proficiency and strong systems thinking. Your AI assessment captures that reality.

Why This Matters for Your Hiring

You’re looking to hire quickly without sacrificing quality. AI assessment accelerates this timeline significantly. Instead of spending hours reviewing portfolios or conducting initial technical screens, the system pre-qualifies candidates automatically.

You get concrete data on:

- Actual technical proficiency levels in languages or frameworks you care about
- Problem-solving methodology and thinking patterns
- Communication clarity when explaining technical concepts
- Learning speed and adaptability indicators
- Role-specific capability readiness

For startup environments where hiring managers wear multiple hats, this automation frees you to focus on culture fit and team dynamics while AI handles capability verification.

Pro tip: Set assessment difficulty to match your actual role requirements, not hypothetical “ideal” candidates—this reduces false positives and gets you candidates who can genuinely contribute from day one.

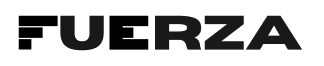

Major Types of AI Skills Evaluation Methods

AI doesn’t use a one-size-fits-all approach to skills assessment. Different evaluation methods target different aspects of candidate capability, and knowing which ones exist helps you pick the right tools for your team’s needs.

The main categories break down like this:

- Automated assessment tools: Systems that score tests, coding challenges, and technical exercises without human intervention
- Personalized recommendation systems: AI that suggests skill development paths based on individual performance data
- Adaptive tutoring systems: Interactive platforms that adjust difficulty and content in real time as candidates work
- Feedback generation systems: Tools that provide instant, detailed insights on performance and improvement areas
- Predictive analytics: Algorithms that forecast which candidates will succeed in specific roles

Each method serves a different purpose in your hiring funnel. The combination you choose depends on the role complexity and how much candidate guidance you want to provide.

Here’s a summary of the main AI evaluation methods and when each is most effective:

| Evaluation Method | Best Use Case | Main Benefit |
| --- | --- | --- |
| Automated assessment tools | Technical screenings for developers | Fast, objective scoring |
| Adaptive tutoring systems | Identifying growth potential | Personalized learning |
| Predictive analytics | Forecasting long-term job fit | Reduced hiring risk |
| Feedback generation systems | Improving candidate experience | Actionable insights |
| Recommendation systems | Tailoring development plans | Career path suggestions |

Assessment and Evaluation Tools

These are the workhorses of technical hiring. They automatically score coding problems, system design questions, and domain-specific challenges. AI-based assessment tools evaluate cognitive and skill-based outcomes by running responses through machine learning algorithms that compare performance against role benchmarks.

What they measure:

- Technical accuracy and correctness
- Code quality and best practices adherence
- Problem-solving efficiency and approach
- Completeness of solutions
- Time management during assessments

These systems eliminate the need to manually review dozens of coding submissions. A candidate completes a challenge, the AI scores it instantly, and you see a numerical skill rating and a detailed breakdown of strengths and weaknesses.
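The correctness part of that score can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not a real product's scoring engine: `score_submission`, `candidate_reverse`, and the test cases are hypothetical names and data invented for this example.

```python
from typing import Any, Callable, Sequence, Tuple


def score_submission(candidate_fn: Callable[..., Any],
                     test_cases: Sequence[Tuple[tuple, Any]]) -> dict:
    """Run a candidate's function against (args, expected) pairs and summarize."""
    per_case = []
    for args, expected in test_cases:
        try:
            passed = candidate_fn(*args) == expected
        except Exception:
            passed = False  # a crash counts as a failed case
        per_case.append(passed)
    return {
        "passed": sum(per_case),
        "total": len(per_case),
        "score": sum(per_case) / len(per_case) if per_case else 0.0,
        "per_case": per_case,
    }


# Hypothetical candidate answer to "reverse a string", with an edge-case bug
def candidate_reverse(s):
    if not s:
        raise ValueError("empty input")
    return s[::-1]


report = score_submission(
    candidate_reverse,
    [(("abc",), "cba"), (("",), ""), (("a",), "a")],
)
print(report["passed"], "of", report["total"])  # 2 of 3
```

The per-case breakdown is what turns a bare number into something useful: here it shows the candidate handled normal input but missed the empty-string case. A real system would also run untrusted code inside a sandbox with time and memory limits, which this sketch leaves out.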

Real-Time Feedback and Personalization

Modern AI does more than score; it teaches. Generative AI assessment methods use natural language processing to produce detailed feedback that explains exactly where candidates struggled and how to improve.

This transforms assessments from judgment tools into learning opportunities. A candidate submits a solution, the system explains what worked and what didn’t, then recommends skill areas to focus on. This approach particularly appeals to candidates who want growth, not just rejection.

Candidates who receive detailed feedback during assessment are more likely to stay engaged with your hiring process and join your team.

For your startup, this builds goodwill even with candidates you don’t hire. They see you invest in their development, which strengthens your employer brand.

Why This Matters

Different roles need different evaluation approaches. A backend engineering role might emphasize algorithmic thinking and system design. A frontend role might instead put more weight on UI/UX judgment and responsive design.

Choosing the right method means:

- Saving hours on manual technical reviews
- Getting consistent scores across all candidates
- Identifying skill gaps you can address during onboarding
- Reducing false positives: candidates who look good on paper but struggle in practice

Pro tip: Combine multiple evaluation methods—use coding assessments for technical skills, real-time feedback systems for communication clarity, and predictive analytics to forecast role fit—to get the complete candidate picture before the first interview.

How AI Enhances Predictive Hiring Analytics

Predictive hiring analytics sounds complex, but it solves a real problem you face every day: figuring out which candidates will actually succeed in your role before they start. AI cuts through the guesswork by analyzing patterns in past hires to forecast future performance.

Traditional hiring relies on intuition. You read a resume, conduct an interview, and make a bet on someone. AI takes the data from thousands of hiring decisions and identifies what actually correlates with success. The difference is measurable and significant.

What Predictive Analytics Actually Does

Machine learning algorithms automate resume parsing, interview scheduling, and performance assessment while forecasting candidate success and job fit through predictive models. This isn’t about replacing your judgment—it’s about giving you better information before you make that judgment.

Here’s what the system analyzes:

- Past hire performance data: Comparing successful employees against unsuccessful ones to find patterns
- Skill-to-role mapping: Identifying which technical abilities predict success in specific positions
- Retention indicators: Flagging candidates likely to stay versus those who typically leave within months
- Team fit signals: Measuring cultural and working-style compatibility with existing team members
- Growth trajectory: Predicting whether candidates can advance into more senior roles

The AI builds a profile of your “ideal” hire based on actual outcomes, not what you think the ideal hire looks like.

How This Reduces Bias and Improves Decisions

Humans have blind spots. You might unconsciously favor candidates who went to specific schools or have certain names. A well-audited AI system can be built to ignore those signals and evaluate resume gaps, skill progression, and role-specific indicators against the same criteria for everyone.

This can improve diversity outcomes. When you rely less on gut-feeling judgment, you get candidates from different backgrounds who meet the same capability standards. Your startup gets stronger because diverse teams solve problems differently.

Predictive analytics removes emotional decision-making from hiring, letting capabilities and fit data drive your choices.

For hiring managers juggling multiple positions, AI provides a confidence score on each candidate. You know immediately which candidates score highest on success metrics specific to your role, saving hours of comparison work.

Real Impact on Your Hiring Process

Machine learning techniques like gradient boosting and random forest models identify which candidate characteristics predict employee attrition and performance. You can now make data-backed decisions on candidate potential rather than betting on credentials.
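Gradient boosting and random forest models usually come from a machine learning library. To keep an example dependency-free, the sketch below swaps in a much simpler technique, logistic regression trained by gradient descent, to show the basic idea of learning a success probability from past outcomes. Everything in it is an assumption for illustration: the function names, the two features, and the six made-up historical records.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic(rows, labels, lr=0.1, epochs=2000):
    """Fit weights and a bias by batch gradient descent on log loss."""
    n_features = len(rows[0])
    weights = [0.0] * n_features
    bias = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for x, y in zip(rows, labels):
            # Prediction error for this record drives the gradient
            error = sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias) - y
            for j, xi in enumerate(x):
                grad_w[j] += error * xi
            grad_b += error
        weights = [w - lr * g / len(rows) for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / len(rows)
    return weights, bias


def success_probability(x, weights, bias) -> float:
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


# Made-up history: (assessment score, work-sample score), both scaled 0-1,
# labeled 1 if the hire met expectations and 0 otherwise
history = [(0.9, 0.8), (0.8, 0.9), (0.7, 0.7), (0.4, 0.3), (0.3, 0.5), (0.2, 0.2)]
outcomes = [1, 1, 1, 0, 0, 0]

weights, bias = train_logistic(history, outcomes)
strong = success_probability((0.85, 0.85), weights, bias)
weak = success_probability((0.25, 0.30), weights, bias)
print(round(strong, 2), round(weak, 2))
```

On this toy data the model assigns the first candidate a higher probability than the second. Six records are far too few for a real model; the point is only that the "confidence score" is a learned function of past outcomes, so it inherits whatever patterns, good or bad, those outcomes contain.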

What changes in practice:

- Faster candidate screening with confidence scores
- Better talent matching to specific role requirements
- Reduced false positives who look good initially but underperform
- Earlier identification of flight risks
- Stronger hiring patterns that compound over time

Your next hire is better informed by every previous hire you’ve made. That’s a compounding advantage.

Pro tip: Track your actual hiring outcomes for 6-12 months, then feed that data back into your predictive model—the more real performance data the system analyzes, the more accurate its forecasts become for future candidates.

Risks, Bias, and Legal Issues in AI Assessments

AI assessments sound objective, but they’re only as fair as the data feeding them. Biased training data creates biased hiring decisions, and that opens you to legal exposure. This isn’t theoretical—it’s happening to companies right now.

You need to understand the risks before deploying any AI hiring system. The stakes are higher than you might think.

Where Bias Hides in AI Systems

Bias doesn’t always look obvious. It creeps in through seemingly innocent data patterns. Algorithmic bias takes multiple forms including social bias reflecting historical discrimination, measurement bias from flawed data collection, representation bias when training data lacks diversity, evaluation bias in how results are assessed, and deployment bias in how systems are actually used.

Here’s how this shows up in hiring:

- Historical data bias: Your AI learns from past hires. If you’ve historically hired more men for engineering, the system recommends men more often.
- Proxy discrimination: The AI picks up on features (zip code, university, hobby) that act as stand-ins for protected characteristics.
- Incomplete skill measurement: The system only measures technical skills but misses communication or leadership potential.
- Evaluation bias: The threshold for “passing” an assessment varies unintentionally by demographic group.

You think you’re being objective. The system thinks it’s being objective. But the result is discrimination.

Legal Exposure You Face

Regulatory frameworks are catching up fast. The European Union’s AI Act classifies AI systems used for recruitment and candidate evaluation as high-risk and attaches obligations to them. In the United States, the Americans with Disabilities Act applies to your assessments if they disadvantage candidates with disabilities.

The real danger: disparate impact lawsuits. You don’t have to intend discrimination for it to be illegal. If your AI assessment systematically rejects qualified candidates from protected groups at higher rates, you’re exposed.

A single bias audit that turns up adverse findings can trigger legal action and damage your reputation in your talent market.

You need documentation showing you tested your system for bias before deployment. Most startups skip this. That’s your liability.

What You Must Do Right Now

Don’t assume your AI assessment vendor handled this. Vendors have their own liability reasons to say their product is fair, but responsibility for the hiring decision stays with you.

Take these steps:

- Audit your assessment data for demographic representation gaps
- Test outcomes by demographic group to spot disparate impact
- Document your bias testing thoroughly before going live
- Monitor actual hiring outcomes after deployment
- Establish a policy for algorithm transparency with candidates
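One common first check for the "test outcomes by demographic group" step is the four-fifths rule of thumb from the US EEOC’s Uniform Guidelines on Employee Selection Procedures: if one group’s selection rate is below 80% of the highest group’s rate, that is generally treated as a sign of adverse impact worth investigating. Here is a minimal Python sketch of that calculation. The function names, group labels, and pilot numbers are all made up for illustration, and passing this check is a screening heuristic, not a legal determination.

```python
from collections import Counter


def selection_rates(records):
    """records: iterable of (group, passed) pairs -> {group: pass rate}."""
    totals, passes = Counter(), Counter()
    for group, passed in records:
        totals[group] += 1
        passes[group] += int(passed)
    return {g: passes[g] / totals[g] for g in totals}


def adverse_impact_ratios(records):
    """Each group's selection rate divided by the highest group's rate."""
    rates = selection_rates(records)
    top = max(rates.values())
    if top == 0:
        return {g: 1.0 for g in rates}  # nobody passed, so there is no ratio to compare
    return {g: rate / top for g, rate in rates.items()}


def flag_groups(records, threshold=0.8):
    """Groups whose ratio falls below the four-fifths threshold."""
    ratios = adverse_impact_ratios(records)
    return sorted(g for g, r in ratios.items() if r < threshold)


# Made-up pilot results with hypothetical group labels
pilot = (
    [("group_a", True)] * 30 + [("group_a", False)] * 20
    + [("group_b", True)] * 12 + [("group_b", False)] * 28
)

print(selection_rates(pilot))   # group_a passes 60%, group_b passes 30%
print(flag_groups(pilot))       # ['group_b']
```

In this example group_b passes at half the rate of group_a, well under the 80% line, so the assessment would need a closer look before going live. Keep the output of checks like this with your bias-testing documentation.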

Data privacy, intellectual property, and algorithmic fairness require institutional policies addressing accountability in AI-generated assessments to ensure responsible and equitable usage. This isn’t just compliance—it protects your company and your candidates.

Building Fairness Into Your Process

Transparency matters legally and practically. Candidates deserve to know they’re being evaluated by AI. They deserve to understand what’s being measured.

Fairness steps that actually work:

- Diverse assessment review teams catch blind spots that any single reviewer would miss
- Regular audits comparing outcomes across demographic groups identify problems early
- Clear communication with candidates about assessment criteria builds trust
- Human review of edge cases prevents algorithmic decisions from going unquestioned

This takes effort. It’s worth it. One lawsuit costs more than months of proper bias auditing.

Pro tip: Before deploying any AI assessment, run a 30-day pilot with your current team’s actual hiring data, compare outcomes by demographic group, and document everything—this creates legal protection and reveals problems before they affect real candidates.

Mistakes to Avoid When Implementing AI Solutions

Many startups deploy AI hiring tools and wonder why results disappoint. They bought the right software but made mistakes in how they implemented it. These aren’t technical failures—they’re strategic ones.

The difference between AI success and AI failure usually comes down to planning and execution discipline. Here’s what actually goes wrong.

The Data Quality Problem

Garbage in, garbage out. Your AI assessment is only as good as the data training it. Most teams underestimate how much work data preparation requires.

Common implementation mistakes include ignoring data quality, underestimating system integration complexity, and over-relying on AI without human oversight. Before deploying, you need clean, representative data that actually reflects the job you’re hiring for.

Data quality failures look like:

- Incomplete historical data: Missing information about candidates who didn’t work out reduces accuracy
- Biased training sets: If your data skews toward one demographic, the AI learns that bias
- Irrelevant metrics: Measuring factors that don’t correlate with actual job success wastes training data
- Outdated information: Tech skills and market demands change; your training data gets stale fast
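Two of the failures above, incomplete records and stale records, are easy to count before you train anything. The Python sketch below shows one way to do that. The record schema (`role`, `assessment_score`, `outcome`, `assessed_on`), the two-year staleness cutoff, and the sample data are assumptions invented for this example, so adapt them to whatever your own hiring data looks like.

```python
from datetime import date

# Hypothetical schema: fields every training record is expected to have
REQUIRED_FIELDS = ("role", "assessment_score", "outcome", "assessed_on")


def audit_records(records, today, max_age_days=730):
    """Count records that are missing fields or older than the cutoff."""
    missing, stale = 0, 0
    for rec in records:
        if any(rec.get(field) is None for field in REQUIRED_FIELDS):
            missing += 1
            continue  # an incomplete record is not also checked for age
        if (today - rec["assessed_on"]).days > max_age_days:
            stale += 1
    return {
        "total": len(records),
        "missing_fields": missing,
        "stale": stale,
        "usable": len(records) - missing - stale,
    }


# Made-up sample: one clean record, one with no outcome, one that is too old
sample = [
    {"role": "backend", "assessment_score": 0.8, "outcome": "hired",
     "assessed_on": date(2024, 3, 1)},
    {"role": "backend", "assessment_score": 0.4, "outcome": None,
     "assessed_on": date(2024, 5, 1)},
    {"role": "frontend", "assessment_score": 0.7, "outcome": "rejected",
     "assessed_on": date(2020, 1, 15)},
]

report = audit_records(sample, today=date(2025, 1, 1))
print(report)  # {'total': 3, 'missing_fields': 1, 'stale': 1, 'usable': 1}
```

If the usable count is a small fraction of the total, that is a signal to fix data collection first. Checks for demographic skew and metric relevance need more context than a script like this can supply.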

You can’t fix bad data with better algorithms. Spend time cleaning your dataset before you touch the AI system.

Over-Reliance on Automation

Here’s the trap: AI seems objective, so you start treating it as infallible. You skip human review because the system gave a score. That’s when mistakes multiply.

Critical implementation pitfalls include inadequate training, neglected bias mitigation, and decisions made without human involvement. AI should augment your hiring, not replace your judgment.

Your hiring managers still need to review borderline candidates. Edge cases still need human context. A perfect algorithm score doesn’t account for culture fit or unexplained gaps that might have good reasons.
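One way to keep humans in the loop is to make the routing rule explicit, so that no score on its own ends a candidate’s process. The Python sketch below is an assumption-laden illustration: the function name, the step labels, and both thresholds are hypothetical and should be set from your own pilot data.

```python
def route_candidate(score: float,
                    advance_at: float = 0.75,
                    reject_below: float = 0.40) -> str:
    """Map a model score in [0, 1] to a next step; thresholds are illustrative."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if score >= advance_at:
        return "advance_with_human_signoff"  # strong score, still confirmed by a person
    if score < reject_below:
        return "human_spot_check"            # low score, sampled for review before rejection
    return "human_review"                    # borderline band gets a full manual look


for s in (0.9, 0.5, 0.1):
    print(s, route_candidate(s))
```

Every branch ends with a person, which is deliberate: the score decides how much attention a candidate gets, not whether they get any.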

The best AI hiring systems remove friction and provide better information—they don’t remove humans from decisions.

You’re still responsible for who gets hired. Act like it.

Strategic and Compliance Failures

Many teams deploy AI without aligning it to actual business needs. For example, you roll out a coding assessment when the role actually calls for a systems design assessment, and the data you collect is wrong from the start.

Avoid these mistakes:

- Deploying without clear success metrics for your specific roles
- Skipping bias audits before going live with candidates
- Ignoring data protection requirements like GDPR or local employment law
- Failing to communicate assessment criteria to candidates
- Skipping training for your team on how to use the system correctly
- Never auditing results after deployment

The compliance piece matters legally. You need documentation showing you tested for bias, you protect candidate data, and you can explain your decisions if challenged.

This table compares common AI hiring pitfalls and how to address them:

| Pitfall | Risk Created | Prevention Tactic |
| --- | --- | --- |
| Poor data quality | Inaccurate candidate scores | Audit and clean datasets |
| Over-automation | Missed human context | Include human review step |
| Compliance errors | Legal and reputational harm | Document bias testing |
| Irrelevant assessments | Bad hiring decisions | Match tool to job needs |
| Lack of transparency | Candidate distrust | Share criteria openly |

Making Implementation Actually Work

Start small. Run pilots with your existing hiring data before expanding. Involve your hiring managers from day one—they know what success actually looks like in your roles.

Build these into your plan:

- Clear alignment between assessment design and actual role requirements
- Data audit and cleaning before deployment
- Documented bias testing with results by demographic group
- Regular audits of real hiring outcomes after launch
- Training for your team on system usage and limitations

This takes time. It’s faster than cleaning up hiring discrimination lawsuits.

Pro tip: Document your implementation decisions and bias testing before deploying to any real candidates—this creates legal protection and lets you prove you acted responsibly if issues arise later.

Unlock the Power of AI-Driven Tech Hiring with Fuerza

The challenge of finding truly skilled tech talent is real and growing. Traditional hiring methods miss critical insights into actual candidate capabilities and often slow down your recruitment process. This article highlights how AI-powered skills assessments provide adaptive, unbiased, and real-time evaluations that reveal what candidates can actually do, not just what their resumes claim. If you want to avoid costly hiring mistakes and accelerate quality hires, embracing AI is not just smart but essential.

At Fuerza, we connect you with pre-vetted tech experts through a seamless AI-enhanced staffing experience. Our platform matches your hiring needs with Nearshore and Onshore resources ready to contribute from day one. Whether you are building your startup or scaling enterprise teams, our AI-backed approach frees you from manual screenings and guesswork while ensuring fairness and consistency throughout the process.

Don’t let slow or biased hiring hold your team back. Visit Fuerza today to discover how AI-powered staffing transforms your tech recruiting. Start hiring smarter, faster, and with confidence now.

Frequently Asked Questions

What is AI-powered skills assessment?

AI-powered skills assessment utilizes machine learning and natural language processing to evaluate candidates’ abilities more accurately and efficiently than traditional methods. It focuses on real performance data rather than just resumes.

How does AI enhance the hiring process?

AI enhances the hiring process by providing real-time feedback, customizing evaluation paths, and reducing human bias, resulting in faster, fairer, and more precise hiring decisions.

What types of AI skills evaluation methods are available?

The main types of AI skills evaluation methods include automated assessment tools, personalized recommendation systems, adaptive tutoring systems, feedback generation systems, and predictive analytics, each serving different purposes in the hiring process.

What are the risks associated with using AI in hiring?

Risks include algorithmic bias stemming from flawed training data, legal exposure from potential discrimination, and reliance on automation without human oversight. Addressing these risks involves thorough audits and documentation of bias testing before implementation.